Does engagement actually matter?

Mar 31, 2015

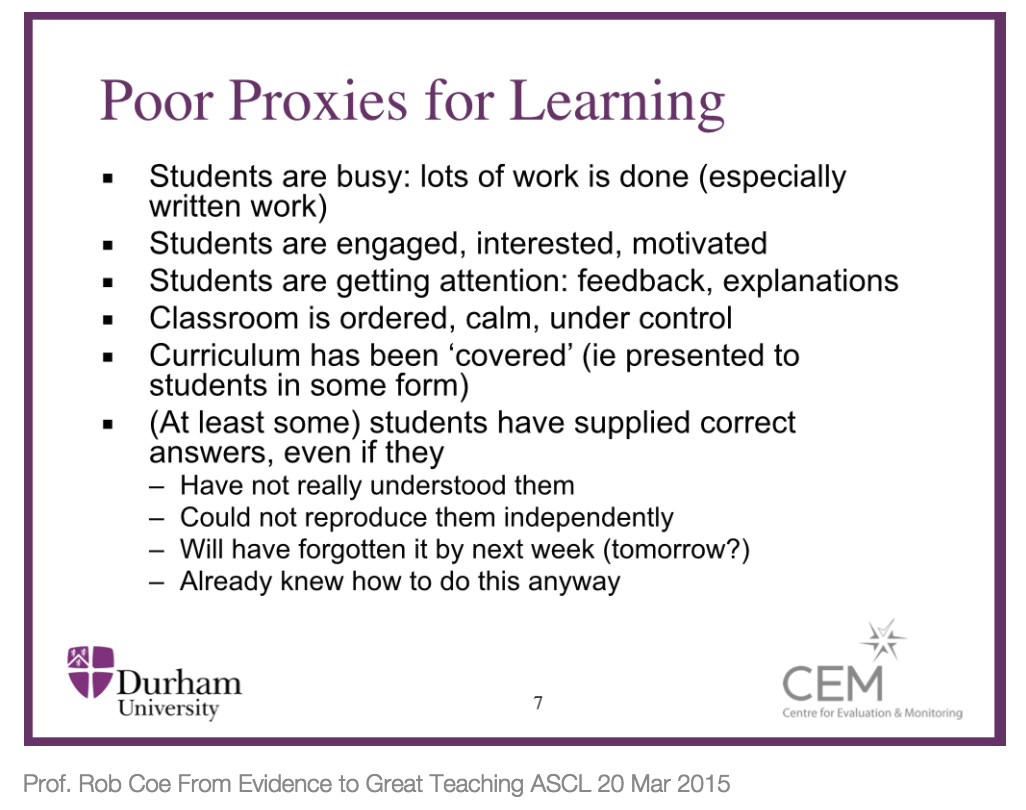

Suggesting that student engagement might actually be a bad thing tends to get certain people's dander up. There was a mild spat recently about Rob Coe reiterating that engagement was a 'poor proxy' for learning.

Carl Hendrick unpicked the problem further:

This paradox is explored by Graham Nuthall in his book ‘The Hidden Lives of Learners’ (2007), in which he writes:

“Our research shows that students can be busiest and most involved with material they already know. In most of the classrooms we have studied, each student already knows about 40-50% of what the teacher is teaching.” p.24

Nuthall’s work shows that students are far more likely to get stuck into tasks they’re comfortable with and already know how to do as opposed to the more uncomfortable enterprise of grappling with uncertainty and indeterminate tasks... The other difficulty is the now constant exhortation for students to be ‘motivated’ (often at the expense of subject knowledge and depth) but motivation in itself is not enough. Nuthall writes that:

“Learning requires motivation, but motivation does not necessarily lead to learning.” p.35

Motivation and engagement are vital elements in learning but it seems to be what they are used in conjunction with that determines impact. It is right to be motivating students but motivated to do what? If they are being motivated to do the types of tasks they already know how to do, or to focus on the mere performing of superficial tasks at the expense of the assimilation of complex knowledge, then the whole enterprise may be a waste of time.

Even this mild critique annoyed some spectators, but now it turns out that engagement might actually be counter-productive. In a new report for the Brown Center, Tom Loveless takes apart the 2012 PISA findings to demonstrate that countries that do well on student motivation do poorly on maths attainment and vice versa. Contrary to every intuition, student engagement and motivation may actually be retarding learning.

Loveless is a cautious analyst and is at pains to point out the risk of jumping to hasty conclusions on flimsy correlational evidence:

The analytical unit is especially important when investigating topics like student engagement and their relationships with achievement. Those relationships are inherently individual, focusing on the interaction of psychological characteristics. They are also prone to reverse causality, meaning that the direction of cause and effect cannot readily be determined. Consider self-esteem and academic achievement. Determining which one is cause and which is effect has been debated for decades. Students who are good readers enjoy books, feel pretty good about their reading abilities, and spend more time reading than other kids. The possibility of reverse causality is one reason that beginning statistics students learn an important rule: correlation is not causation. p 28

Data reveal that several countries noted for their superior ranking on PISA—e.g., Korea, Japan, Finland, Poland, and the Netherlands— score below the U.S. on measures of student engagement. Thus, the relationship of achievement to student engagement is not clear cut, with some evidence pointing toward a weak positive relationship and other evidence indicating a modest negative relationship. p 27

Nations with the most confident math students tend to perform poorly on math tests; nations with the least confident students do quite well. p 28

Loveless points out that within-country analysis reveals something concealed by between-country analysis:

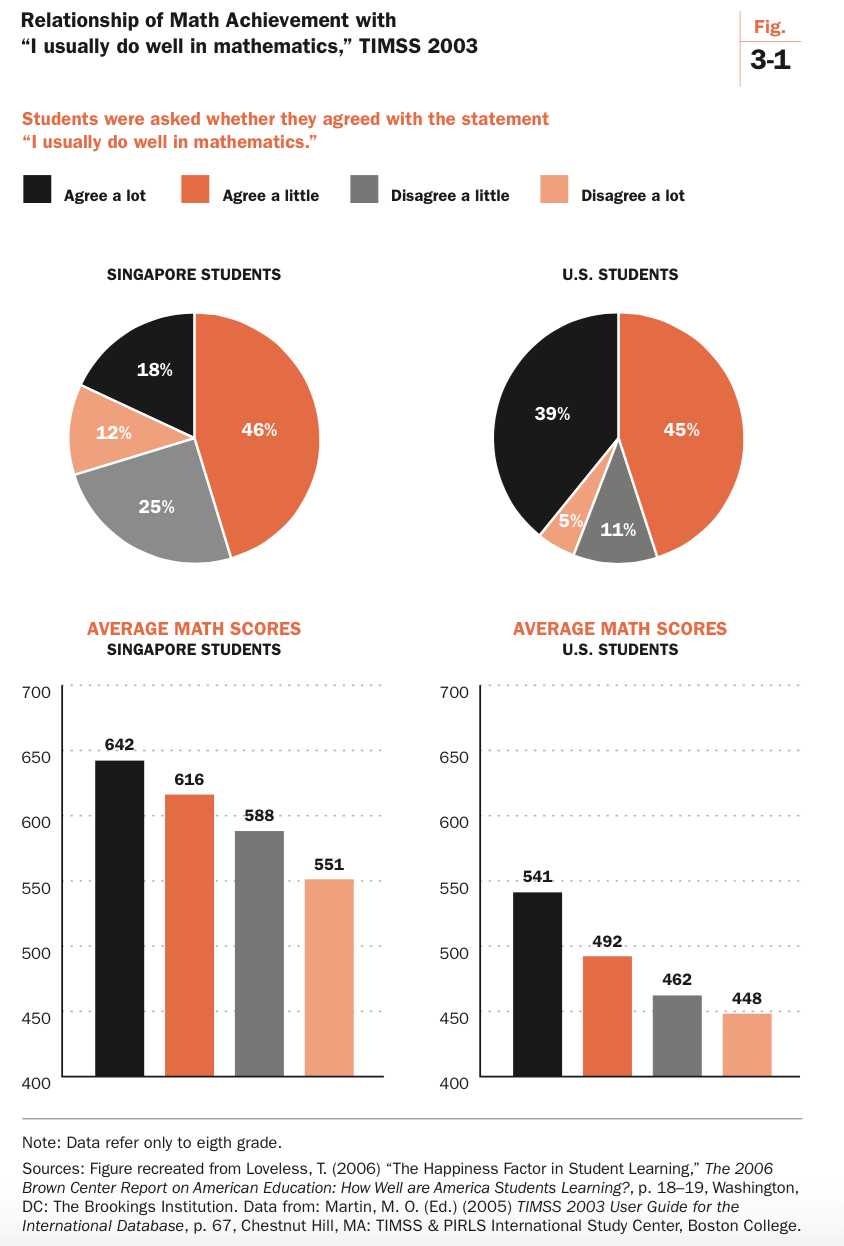

In Singapore, highly confident students score 642, approximately 100 points above the least-confident students (551). In the U.S., the gap between the most- and least- confident students was also about 100 points— but at a much lower level on the TIMSS scale, at 541 and 448. p.29

But, "the least-confident Singaporean eighth grader still outscores the most-confident American, 551 to 541."
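For anyone who wants to see the arithmetic laid out, here is a minimal sketch in Python using only the four TIMSS scores quoted above. The variable names are my own and purely illustrative; nothing here comes from the report beyond the numbers themselves.

```python
# Mean TIMSS eighth-grade maths scores as quoted from Loveless (p.29).
scores = {
    "Singapore": {"most_confident": 642, "least_confident": 551},
    "U.S.": {"most_confident": 541, "least_confident": 448},
}

# Within each country, the more confident group scores higher.
within_gaps = {
    country: groups["most_confident"] - groups["least_confident"]
    for country, groups in scores.items()
}

# Between countries the picture flips: compare the least-confident
# Singaporean group with the most-confident American group.
cross_gap = scores["Singapore"]["least_confident"] - scores["U.S."]["most_confident"]

print(within_gaps)  # {'Singapore': 91, 'U.S.': 93}
print(cross_gap)    # 10
```

Confidence tracks achievement inside each country (gaps of 91 and 93 points), yet the least-confident Singaporean group still sits 10 points above the most-confident American group. That is why the unit of analysis matters so much.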

He goes on to show that "enjoying math is not positively related to math achievement. Nor is looking forward to one’s math lessons."

Now none of this proves that engagement and intrinsic motivation are actually bad for attainment, but serious doubts are cast on policy measures which seek to boost student engagement in the belief that results will improve. Suitably cautious, Loveless says, "PISA provides, at best, weak evidence that raising student motivation is associated with achievement gains. Boosting motivation may even produce declines in achievement."

The report doesn't really seek to unpick why all this might be so, but there does seem to be a link with the points Nuthall made above: what students enjoy may not actually involve much in the way of learning. Hard work often isn't fun. If Rob Coe is right [UPDATE: I no longer think he's quite right] that "learning happens when people have to think hard" then it's small wonder that activities that prioritise engagement and motivation over 'thinking hard' don't actually result in long-term retention and transfer between contexts.

There's also compelling evidence that we're pretty terrible at judging when we learn best. How would you go about learning a new skill, memorising dates, or learning how to solve an equation? Most of us tend to review the basics, complete a few related practice exercises, reach an acceptable level of proficiency, and then move along to the next topic. This approach is known as blocking, or massed practice. Massed practice allows us to focus on learning one topic or skill area at a time. The topic or skill is repeatedly practised for a period of time and then you move on to another skill and repeat the process. Interleaving practice, on the other hand, involves working on multiple skills in parallel.

Even though laboratory tests and classroom trials have demonstrated that interleaving is clearly a more effective way to learn than massing practice, the fact that performance is lower during instruction fools us into believing that it must be ineffective. Even showing the evidence of improved test results can just lead to a Backfire Effect, with over 65 percent of students simply discounting the evidence and continuing to do what they’ve always done. Part of the problem is that by boosting students' performance during instruction and then blocking practice we encounter the ‘illusion of knowing’ and that warm, fuzzy feeling of cognitive ease. Time and again, we prefer what we believe to be true to the uncomfortable realities of what is actually true.

To sum up, I'm not saying engagement and motivation don't matter at all - clearly they are important in all sorts of contexts - but the idea that there is any kind of direct link to achievement appears to be dubious. If you want to engage students because you want them to be more engaged, fine. But if you believe that engagement will automatically lead to better results you may well be mistaken.
