How do we know pupils are making progress? Part 1: The madness of flight paths

Mar 23, 2019

Schools are desperate to find ways to predict students' progress from year to year and between key stages. Seemingly, the most common approach to solving this problem is to produce some sort of 'flight path'. The internet is full of such misguided attempts to do the impossible.

Predicting a student's progress is a mug's game. It can't be done. At the level of a nationally representative population sample we can estimate the likelihood of someone measured as performing at one level going on to attain another, but this is meaningless at the level of individuals. It should therefore be obvious that using flight paths to inform assessment or guide teaching compounds an error. So, why are schools using them?

The answer is, I think, threefold. First, many school leaders still believe someone somewhere requires them to do this. Second, some actually believe it's possible or desirable to make a meaningful statement about an individual student's chances of achieving a prediction. Third, there's a belief that reports to parents, governors, inspectors or some other shadowy party must be based on numbers. Let's address each of these in turn.

Do I have to predict students' grades?

No. Here's a video of Matthew Purves, Deputy Director, Schools, explaining why Ofsted will be ignoring pointless data:

https://www.youtube.com/watch?v=zcrp5N6c334

He says,

“Data should not be king. Too often, vast amounts of teachers' and leaders' time is absorbed into recording, collecting and analysing excessive progress and attainment data within schools. And that diverts their time away from what they entered the profession to do, which is to be educators. And, in fact, with much of that progress and attainment data, they and we can't be confident that it's valid and reliable information. ... inspectors will not look at schools' internal progress and attainment data.”

To be clear, no one is going to prevent you from collecting data, but inspectors won't be interested in it.

Is it possible or desirable to make a meaningful statement about an individual student's chances of achieving a predicted grade?

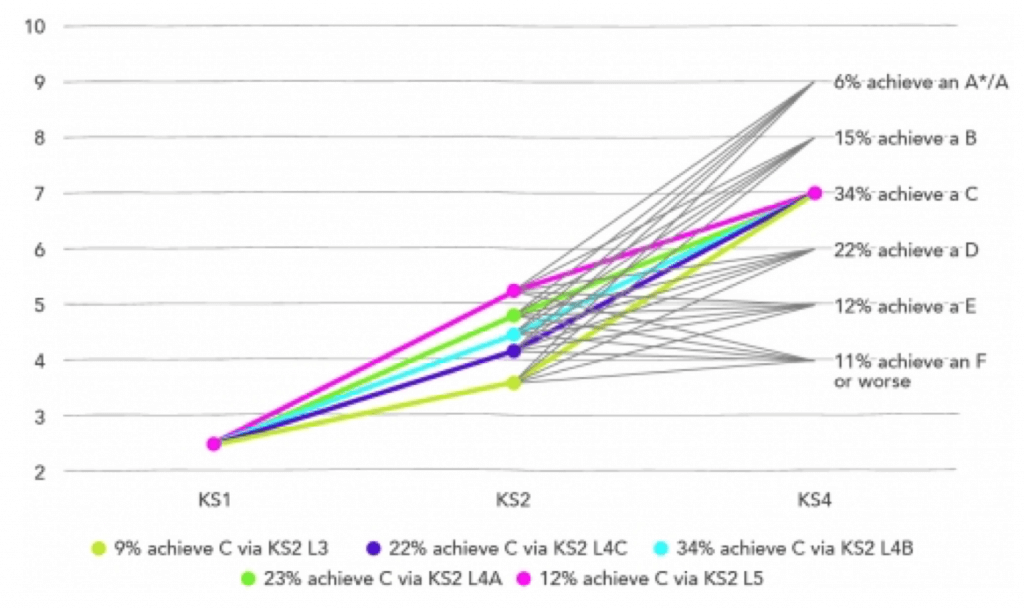

No. As Mike Treadaway demonstrated on the Education Datalab blog, measuring pupil progress involves more than drawing a straight line. As you can see from the graph above, children attaining identical scores in KS1 go on to achieve widely varying results at the end of KS2, and by the time they're 16, the best we can say is that 66% of them will achieve a grade other than the one they were predicted to get. And of those 34% who do hit their prediction, “More children get to the ‘right’ place in the ‘wrong’ way, than get to the ‘right’ place in the ‘right’ way.”

These predictions are based on data derived from standardised national examinations. As such, we can make empirical statements about their reliability and the validity of our inferences. Reliability varies from subject to subject but for more subjectively assessed subject areas (English, history etc.) reliability might be as low as 0.6. Essentially, what this means is that 40% of papers would have received a different mark if marked by a different examiner. And there are also issues about whether a student would receive the same mark if they sat the paper on a different day. This might sound alarming but it's infinitely better than the vast majority of internal assessments conducted by schools where no one even attempts to collect data about reliability, never mind the host of other variables that might be out of kilter. What this boils down to is that internal progress and attainment data is, in most cases, a spurious combination of bias, coin tosses and post-hoc rationalisation.

Ofsted's National Director for Education, Sean Harford, has said, “Flight paths in secondary are nonsense and demotivating for pupils.” When predictions are made about individual students' performance, these predictions can become self-fulfilling. If students (or teachers) believe that they can't do better than a Grade 4, many will stop trying. Why would we ever want to convince anyone that children are less capable than they might be? As the graph above shows, prior attainment does allow us to make meaningful predictions about very large groups of pupils, but these predictions are probabilistic. They are not fate.
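To make the difference between a group prediction and an individual one concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the predicted grade, the spread of outcomes and the cohort size are invented, and none of it comes from the Education Datalab analysis or any real cohort. It simply simulates a large group of pupils who share the same starting point and asks two questions: where does the group end up on average, and how many individuals land on the predicted grade?

```python
import random
from statistics import mean

# All numbers here are invented for illustration; they are not taken from
# Education Datalab or any real cohort.
random.seed(2019)

PREDICTED_GRADE = 5   # hypothetical flight-path target for this group
SPREAD = 1.5          # assumed standard deviation of real outcomes, in grades
N_PUPILS = 10_000     # pupils who all started with the same prior attainment


def simulate_grade() -> int:
    """Draw one pupil's final grade, rounded and clamped to the 1-9 scale."""
    raw = random.gauss(PREDICTED_GRADE, SPREAD)
    return min(9, max(1, round(raw)))


grades = [simulate_grade() for _ in range(N_PUPILS)]

# The group-level summary: the average outcome across everyone.
group_mean = mean(grades)

# The individual-level question: what share of pupils hit the exact prediction?
hit_rate = sum(g == PREDICTED_GRADE for g in grades) / N_PUPILS

print(f"Group average grade: {group_mean:.2f}")
print(f"Pupils landing exactly on the prediction: {hit_rate:.0%}")
```

Under these assumed numbers the group average sits very close to the predicted grade, while only around a quarter of the simulated pupils actually achieve it. Change `SPREAD` and the share changes, but the point stands: a prediction that is sound for the group tells you very little about any one child in it.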

Do reports to parents, governors, or anyone else have to be based on progress and attainment data?

No. We've already seen that inspectors will ignore such reports, and there is no external requirement that parents or governors must receive reports based on made-up numbers. Reporting and assessment are not the same. When it comes to knowing how their children are getting on at school, parents have the right to receive an annual report, but the form this report takes is for schools to determine. Speaking as a parent, what I want to know about my children is 1) Are they happy and safe, 2) How are they getting on in relation to the other students in the same class, and 3) Are they working hard?

If, in answer to question 2, I'm given a number derived from a flight path's flawed notion of where students should be, this just provides an illusion of knowledge. What does the number mean? If I'm given a GCSE grade, what I'm being told is that the teacher has the power to forecast the future and determine not only how well my child will do in an examination at some, possibly distant, point in the future, but also how well they're likely to do in relation to every other child who takes the exam. This is intellectually dishonest. What the teacher can tell me is how well they seem to be doing in relation to their peers. For that, all I need to know is whether they are exceeding expectations, keeping up or struggling.

How do teachers know this? Because they're informally gathering data every single lesson. When these informal impressions are turned into numbers they're imbued with mystical properties. We can start turning them into percentages and averaging them. They take on an importance that far exceeds their status as a very poor proxy for my child's progress in a particular subject. But when they're turned into a narrative, although this is no more or less 'true' than a number, it's easier to both challenge and interpret. I can reply with a narrative of my own that maybe helps shape the teacher's understanding, and vice versa. I also know that the narrative is what the teacher reckons, their best guess. If they think things are fine, then that is subject to change. If they're concerned, there's probably some cause. All of this is far more useful than made-up data.

What should you do instead?

Chief Inspector, Amanda Spielman, has said the following:

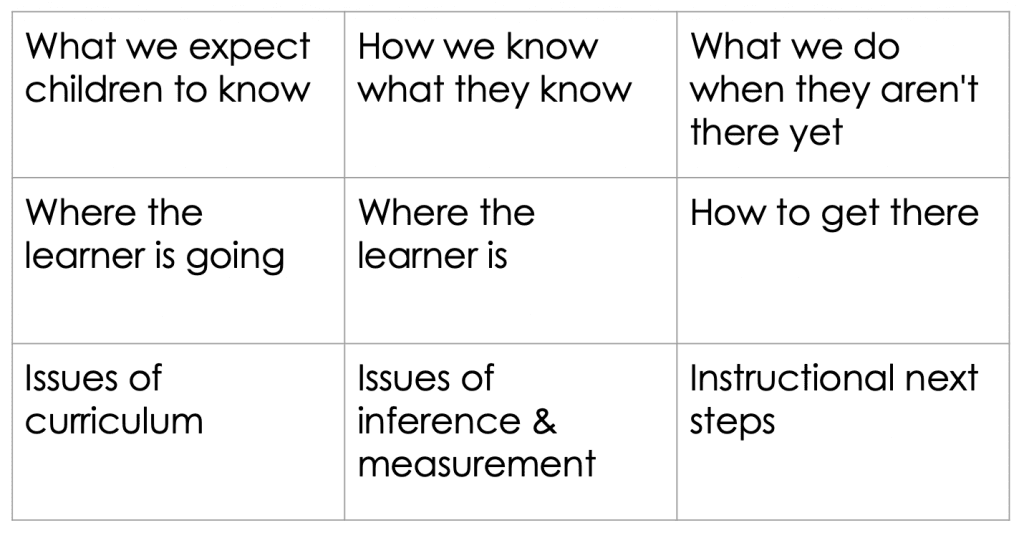

“What I want school leaders to discuss with our inspectors is what they expect pupils to know by certain points in their life, and how they know they know it. And crucially, what the school does when it finds out they don’t! These conversations are much more constructive than inventing byzantine number systems which, let’s be honest, can often be meaningless.”

This can be boiled down to three areas: curriculum, assessment and instruction.

Over my next three posts I intend to break each of these down so that we can consign the flight path to the dustbin of failed educational ideas and go about designing a system that is genuinely useful to students, teachers, and everyone else involved in education.

Part 2: The curriculum

Part 3: Assessment

Part 4: Instruction

Acknowledgements

Thanks to Matthew Benyohai for digging out so many examples of flight paths and prompting this blog. Thanks also to Deep Ghataura who mapped Amanda Spielman's speech against the principles of AfL.
