How do we know pupils are making progress? Part 4: Instruction

Apr 07, 2019

This is the final post in a series looking at how we can be sure that students are making progress through the curriculum. The whole purpose of knowing whether students are making progress is to be able to design appropriate instructional sequences. We may believe children are motoring through our wonderfully constructed curriculum, but if empirical data reveals this not to be the case, we need to know.

In my last post I discussed the importance of being able to glean meaningful data on item difficulty by seeing how well students do on particular assessment tasks. If all students are getting certain questions right all of the time, those questions are probably too easy and it's time to increase the challenge and complexity of what's being studied. Equally, if most children are failing to answer tasks related to an aspect of the curriculum we've covered, then we need to consider how we intend to adjust our instruction to make sure more students can successfully answer such questions.
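As a rough illustration of what "item difficulty" means in practice, here is a minimal sketch that works out the proportion of students answering each item correctly. The response data, the student labels and the `item_facility` function name are all invented for the example; none of it comes from a real assessment.

```python
# Hypothetical response data: 1 = correct, 0 = incorrect.
# Keys are students, inner keys are assessment items; all values are invented.
responses = {
    "student_1": {"a": 1, "b": 1, "c": 1, "d": 0},
    "student_2": {"a": 1, "b": 0, "c": 1, "d": 0},
    "student_3": {"a": 1, "b": 1, "c": 1, "d": 1},
    "student_4": {"a": 0, "b": 1, "c": 1, "d": 0},
}


def item_facility(responses):
    """Return the proportion of students who answered each item correctly.

    An item a student did not attempt is counted as incorrect (an assumption
    made for this sketch).
    """
    items = sorted({item for answers in responses.values() for item in answers})
    n_students = len(responses)
    return {
        item: sum(answers.get(item, 0) for answers in responses.values()) / n_students
        for item in items
    }


facility = item_facility(responses)
print(facility)  # {'a': 0.75, 'b': 0.75, 'c': 1.0, 'd': 0.25}
```

In this made-up class, everyone answered item c correctly, so it may be too easy, while only a quarter managed item d, which suggests either the item or the teaching behind it needs a second look.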

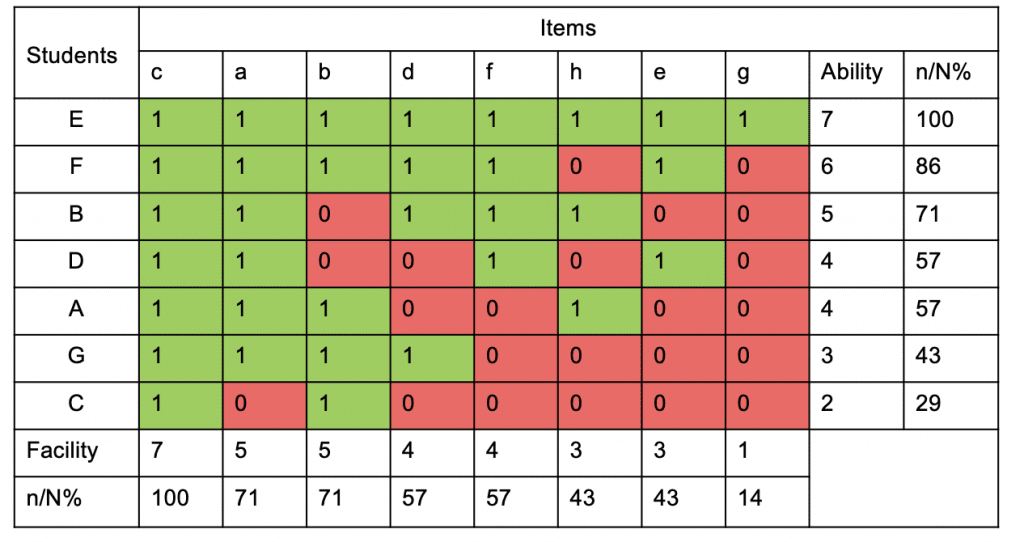

Let's return to the fictitious scalogram from the previous post:


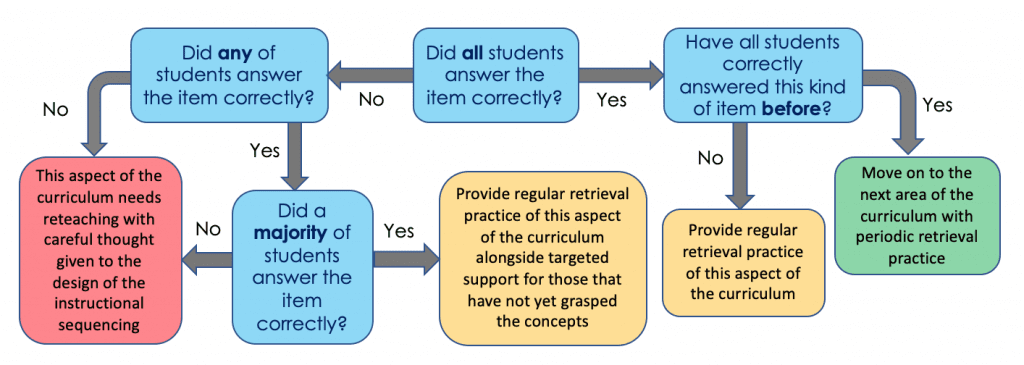

Looking at this we can infer that there's a reasonable probability that students have mastered item c and that, although some students have struggled with items a and b, most seem to have got them. We will almost certainly need to return to the topics addressed by these items but we can also be reasonably sure that most students will have a secure understanding. The picture is hazier for items d and f; although a majority of students answered them correctly, we ought to be aware that those who did get the questions right may have done so with a fair trailing wind. We should definitely not assume that the students who answered these items correctly would be able to answer similar questions in the future. This is a clear signpost that future teaching needs to consolidate the topics represented by these items. Finally, looking at items h, e and g, it should be clear that these areas of the curriculum are not well understood and that future instruction should be based on the assumption that even those students who answered the items successfully may not do so again.

For clarity, I've condensed the paragraph above into a flowchart:

[Figure: Instructional response flowchart]
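The same decision process can be sketched as a small function. This is only an approximation of the flowchart: the cut-offs of 0.8 and 0.5 are my own illustrative assumptions, not figures from the scalogram, and the function name and the wording of the responses are hypothetical.

```python
def instructional_response(facility, secure=0.8, insecure=0.5):
    """Suggest a next step from the proportion of students answering correctly.

    `secure` and `insecure` are assumed thresholds chosen for illustration;
    in practice they would be a matter of professional judgement.
    """
    if not 0.0 <= facility <= 1.0:
        raise ValueError("facility must be a proportion between 0 and 1")
    if facility >= secure:
        # Students appear to 'get it'.
        return "move on, with spaced retrieval practice"
    if facility >= insecure:
        # We're not sure whether students get it.
        return "proceed with caution: consolidate and keep scaffolding in place"
    # Students definitely don't get it.
    return "check the item, then the teaching sequence, and reteach"


# Invented facility values for three items.
for item, value in {"c": 1.0, "d": 0.6, "g": 0.2}.items():
    print(item, "->", instructional_response(value))
```

Whatever thresholds are chosen, the point is that each band leads to a different instructional response rather than a single pass or fail judgement.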

What to do if students 'get it'

If we can clearly see that an area of the curriculum appears well understood, it makes sense to move on through our curriculum-as-progression-model to the next point in the sequence. However, we should always be mindful that current performance does not prove content will be retained indefinitely. In fact, we would be wise to assume that students will begin to forget content that is not being regularly retrieved. To that end, we should include spaced retrieval practice of previously learned content interleaved with the presentation of new content.

This retrieval practice does not have to take the form of a pen-and-paper test. It might feel appropriate to get students to fill in partially completed diagrams, draw concept maps, or simply answer questions. One of the simplest ways to ensure instructional sequences are constantly looping back to previously learned content is to begin each lesson with four or five questions about what was studied last lesson, last week, last term, last year. The important part of this retrieval practice is not that students answer the questions correctly or that they get feedback on their performance, but that they struggle to dredge answers back from long-term memory, thereby building storage strength. If these questions are multiple choice, retrieval practice can be very efficient, with students only needing to record, say, 1, 2 or 3 on a mini whiteboard to indicate which answer they think is correct, and with teachers able to see at a glance how students are performing.
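For anyone who keeps their questions in a spreadsheet or similar, a lesson starter like this could be put together along the following lines. This is a minimal sketch: the question bank, its placeholder questions and the `starter_quiz` function are all hypothetical, and picking one question at random from each period is just one reasonable way to do it.

```python
import random

# Hypothetical question bank, grouped by when the content was taught.
question_bank = {
    "last lesson": ["last-lesson question 1", "last-lesson question 2"],
    "last week": ["last-week question 1", "last-week question 2"],
    "last term": ["last-term question 1", "last-term question 2"],
    "last year": ["last-year question 1", "last-year question 2"],
}


def starter_quiz(question_bank, rng=None):
    """Pick one question at random from each period that has any questions."""
    rng = rng or random.Random()
    return {
        period: rng.choice(questions)
        for period, questions in question_bank.items()
        if questions
    }


for period, question in starter_quiz(question_bank).items():
    print(f"{period}: {question}")
```

Because every period is sampled each time, older content keeps coming back round even when the current topic seems secure.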

What to do if students definitely don't get it

If most students are unable to correctly answer questions on an aspect of the curriculum, then something has clearly gone wrong. It might be that the questions you asked were badly thought through or poorly worded. If that's the case, make sure future assessments contain better items. But it might also be a problem with previous instruction. Curriculum design is - or should be - an iterative process. What seemed a great idea when we planned it out can often fail to land. Sometimes, an explanation that worked wonderfully with one class falls on barren ground with another. If assessment reveals that children are not progressing through the curriculum as expected then we must remediate.

This might mean a complete overhaul of our instructional sequencing: was there something wrong with our explanations? Did we rush through worked examples without breaking processes down sufficiently? Did we take away vital scaffolding too quickly? Or did we perhaps fail to take it away at all, allowing students to become dependent on it? Did we allow for enough guided practice? If we've planned for progression, then it's unlikely that we will have to teach the whole thing from scratch. By examining the teaching sequence for weak links, we should be able to work out whether we can just add in more modelling or practice.

Additionally, we know some aspects of the curriculum will present more difficulties than others; some things are just too abstract and counter-intuitive to be easily grasped. This being the case, we should ensure that these predictably tricky areas are regularly looped back to in our curriculum plan.

What to do when we're not sure whether students get it

Uncertainty is, for the most part, the only intellectually honest position to take; we can never truly know whether current performance will lead to future retention and application to new contexts. When item analysis reveals that particular areas of the curriculum are not confidently understood, or that certain students are far less clear than others, this doesn't mean we have to drop everything to reteach the topic, but it does mean we should proceed with caution.

As we embark on a new aspect of the curriculum we should assume that students will not necessarily grasp foundational concepts, and take every opportunity to reiterate explanations and link new content to previously learned content. In addition to providing regular opportunities for retrieval practice for all students, we should also keep an especially careful eye on students we know failed to answer items correctly in previous assessments. This should not mean that we give such students a less challenging route through the curriculum, or proceed at a slower pace. Instead we should keep scaffolding in place for longer whilst maintaining our high expectations that they can master the essential elements of the curriculum.

*

None of this should come as much of a surprise, but I hope that explicitly linking instruction to assessment and curriculum clarifies some potential points of confusion. To simplify matters further, here are the five essential points of the process once more:

- Use assessment to make decisions about instructional next steps by making sure you know which items students can answer confidently and which are currently too difficult.

- Establish why students have struggled with items - is it due to poor assessment design or poor instructional design?

- If content needs re-teaching, work out which aspects of the instructional sequence need revisiting: do students need better explanation, better modelling, better scaffolding or more practice?

- When teaching new curriculum content make sure students are given regular retrieval practice of previously learned content, even if you think students have definitely mastered it.

- Don't reduce aspirations for those students who seem to be struggling; think about how to provide better scaffolding.
