Everything we've been told about teaching is wrong, and what to do about it!

Mar 09, 2014

#Dylan Wiliam

#John Hattie

#Robert Bjork

#David McRaney

#Christopher Chabris

#Daniel Simons

#Kathryn Schulz

#PedagooLondon14

It was great to be back at the IOE for Pedagoo London 2014, and many thanks must go to @hgaldinoshea & @kevbartle for organising such a wonderful (and free!) event. As ever, there was never enough time to talk to everyone I wanted to, but I particularly enjoyed Jo Facer's workshop on cultural literacy and Harry Fletcher-Wood's attempt to stretch a military metaphor to provide a model for teacher improvement. As I was presenting last, I found myself unable to concentrate during Rachel Steven's REALLY INTERESTING talk on Lesson Study, so I returned to the room where I would be presenting and caught the end of Kev Bartle's fascinating discussion of a Taxonomy of Errors.

If you've been following the blog, you may be aware that I've been pursuing the idea that learning is invisible and that attempts to demonstrate it in the classroom may be fundamentally flawed. Clearly, what with all the reading and thinking I'm putting into this line of inquiry, I think I'm right. But then, so do we all. No teacher would ever choose to do something they believed to be wrong just because they were told to, would they?

You are wrong!

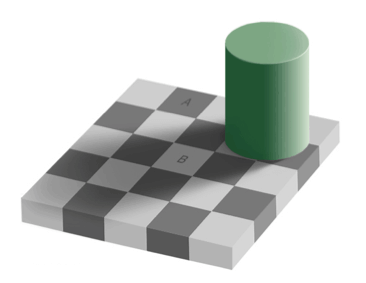

The starting point for my presentation was to address the fact that we're all wrong, all the time, about almost everything. This is a universal phenomenon: to err is human. But we only really believe that applies to everyone else, don't we? I began by posing this question: how can we know when our perception is accurate and when it's not? Worryingly, we cannot. Take a look at this picture. Contrary to the evidence of our eyes, the squares labelled A and B are exactly the same shade of grey. That's insane, right? Obviously they're different shades. We know because we can clearly see they're different shades. Anyone claiming otherwise is an idiot.

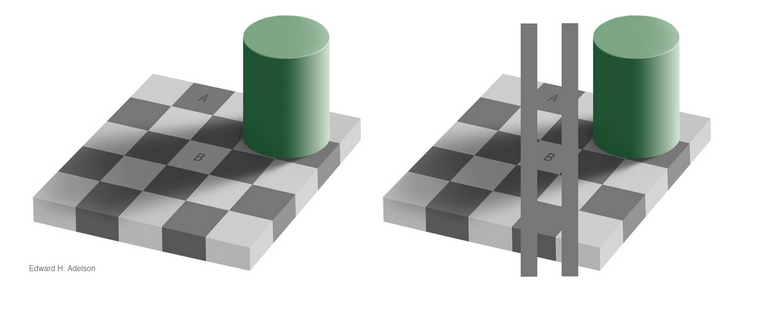

Well, no. As this second illustration shows, the squares really are the same shade.

How can this be so? Our brains know A is dark and B is light, so we edit out the effects of the shadow cast by the green cylinder and compensate for the limitations of our eyes. We literally see something that isn't there. This is a common phenomenon, and Kathryn Schulz describes illusions as "a gateway drug to humility" because they teach us what it is like to confront the fact that we are wrong in a non-threatening way.

Watch this video and count the number of completed passes made by players wearing white T-shirts. Try to ignore the players wearing black T-shirts.

http://www.youtube.com/watch?v=IGQmdoK_ZfY

How many passes did you count? The answer is 16, but did you see the gorilla?

Daniel Simons and Christopher Chabris's research into 'inattentional blindness' reveals a similar capacity for wrongness. Their experiment, the Invisible Gorilla, has become famous. If you've not seen it before, it can be startling: just under 50% of people fail to see the gorilla. And if you had seen it before, did you notice one of the players in black T-shirts leave the stage? You did? Did you also see the curtain change from red to gold? Vanishingly few people see all these things. And practically no one sees them all and still manages to count the passes!

Intuitively we don't believe that almost half the people who first see that clip would fail to see a man in a gorilla suit walk on stage and beat his chest for a full nine seconds. But we are wrong.

But it's not usually so simple to spot where we go wrong. Our brains are incredibly skilled at protecting us from the uncomfortable sensation of being wrong. There's a whole host of cognitive oddities that prevent us from seeing the truth. Here are just a few:

- Confirmation bias - the fact that we seek out only that which confirms what we already believe

- The Illusion of Asymmetric Insight - the belief that though our perceptions of others are accurate and insightful, their perceptions of us are shallow and illogical. This asymmetry becomes more stark when we are part of a group. We progressives see clearly the flaws in traditionalist arguments, but they, poor saps, don't understand the sophistication of our arguments.

- The Backfire Effect - the fact that when confronted with evidence contrary to our beliefs we will rationalise our mistakes even more strongly

- Sunk Cost Fallacy - the irrational response to having wasted time, effort or money: I've committed this much, so I must continue or it will have been a waste. I spent all this time training my pupils to work in groups, so they're damn well going to work in groups, and damn the evidence!

- The Anchoring Effect - the fact that we are incredibly suggestible and base our decisions and beliefs on what we have been told, whether or not it makes sense. Retailers are expert at gulling us, and so are certain education consultants.

Things we've been told about teaching that may be wrong:

- You can see learning

- Improving pupils' performance is the best way to get them to learn

- Outstanding lessons are a good thing

- Feedback is always good

- AfL is great

What to do about it:

- Abandon the Cult of Outstanding

- Be careful about how we give feedback

- Introduce ‘desirable difficulties’

- Question all your assumptions – be prepared to ‘murder your darlings’

Some books which have been both fascinating and extremely helpful in developing my thinking are:

Being Wrong: Adventures in the Margin of Error by Kathryn Schulz

You Are Not So Smart by David McRaney

The Invisible Gorilla by Daniel Simons & Christopher Chabris

The Learning Spy Substack is a sharp, provocative dispatch from the front lines of education, where ideas are tested, myths are challenged, and nothing is taken for granted.