How do we know if a teacher's any good?

May 09, 2015

#Graham Nuthall

#Sutton Trust

#Robert Coe

#Eric Kalenze

#Charlotte Danielson

#Jack Marwood

#Steve Higgins

Obviously enough, not all teachers are equal. But how do we know which ones are any cop? Well, we just do, don't we? Everyone in a school community tends to know who's doing a decent job. But how do we know? Rightly, most school leaders feel it important to evaluate the effectiveness of their staff, but how can they go about this in a way that's fair, valid and reliable?

Over the past year or so I've spent a fair bit of time explaining why lesson observation cannot be used to evaluate effective teaching. Mostly, the message has been received and understood. Even Ofsted got me to redraft the Quality of Teaching Section of the current Inspection Handbook to make it clear what can and can't be achieved in lesson observations. But how then, I'm often asked, should schools judge the effectiveness of their staff?

It's a good question. My initial response was to say, look at the data: if a teacher's exam results are good then whatever the teacher is doing must also be good. This is beguilingly simplistic, and I've come to understand that results are only a slightly better proxy than lesson grades. You see, exam results are achieved by children. We see correlation and are fooled into believing it is causation. This is the Input/Output Myth. Students' performance tells us relatively little about what a teacher has done. There are some children who will not make progress whatever you do and some who will fly despite you. Teaching is leading the horse to water; learning is having a drink.

Teaching input only really results in proxies: well-behaved classes, lots of work in books, homework in on time, reams of marking and so on. Students have to do the learning all by themselves. As Graham Nuthall put it,

Student learning is a very individual thing. Students already know at least 40-50% of what teachers intend them to learn. Consequently they spend a lot of time in activities that relate to what they already know and can do. But this prior knowledge is specific to individual students and the teacher cannot assume that more than a tiny fraction is common to the class as a whole. As a consequence at least a third of what a student learns is unique to that student, and the rest is learned by no more than three or four others.

Teacher input does not inexorably lead to student output. Jack Marwood explains further:

Teachers can teach, and some children will not learn. Yes, children may engage in activities which a teacher has prepared for them - often at painstaking length, taking into account their current knowledge, skills and understanding, their age, aptitude, learning preferences, social needs and cultural heritage, and so on, and on, and on. But children will react to teaching in their own individual way. Sometimes children are keen to learn, wanting to please themselves, their friends, their families – someone, anyone. Sometimes children do not feel this way. Learning is at times hard, unpleasant and pointless. It always requires effort. It matters little whether a child’s teacher is a subject specialist, or a subscriber to progressive or traditional theories of education, or dull, or interesting, or any of the other myriad of descriptions of teachers. It does not matter if the teacher teaches in a way which outside observers identify as ‘good’ or ‘outstanding’ teaching.

No one thinks teaching has no effect on student outcomes, but quantifying it is extraordinarily difficult. Nick Rose quotes Daniel Muijs from the School Improvement & Effectiveness Research Centre at Southampton Education School as claiming, "teaching probably only accounts for around 30% of the variance in such outcome measures." It's really worth reading Nick's summary of Muijs's ideas on developing teaching. He concludes that student value-added measures, used carefully alongside other measures, might allow us to judge teacher effectiveness reliably, but at a considerable cost:

It may be possible, through a rigorous application of some sort of combination of aggregated value-added scores, highly systematised observation protocols (Muijs suggested we’d need around 6-12 a year) and carefully sampled student surveys to give this summative judgement the degree of reliability it would need to be fair rather than arbitrary. Surely the problem is that for summative measures of effective teaching to achieve that rigour and reliability they would become so time-consuming and expensive that the opportunity costs would far outweigh any benefits.

So, if you can't rely on lesson observations or results, how can you judge teacher effectiveness? Rob Coe et al put a lot of thought into this question when they wrote the Sutton Trust report, What Makes Great Teaching. This does a pretty good job of telling us what great teaching is, but does less well on how we might measure it. They suggest seven methods for evaluation:

- classroom observations, by peers, principals or external evaluators

- ‘value-added’ models (assessing gains in student achievement)

- student ratings

- principal (or headteacher) judgement

- teacher self-reports

- analysis of classroom artefacts

- teacher portfolios

...observed degrees of students' initiative, self-direction, and expression are ultimately used to determine a teacher's level of effectiveness on Danielson's 'Engaging Students' scale. In other words, for a teacher's rating to progress up and up through this domain element's levels of performance, students must be observed exercising more and more control of the classroom environment. (If we take this to a logical conclusion, the perfect Danielson teacher is one who does no actual teaching whatsoever.) ... the message is clear: while all teachers are expected to bring their students into learning, great teachers involve students by getting them to help determine matters of behaviour, learning activities, content, and all the rest - while never, of course, losing control of the classroom.

We've been there before, haven't we? What about value-added measures? Apart from the critique above, the report finds them problematic too:

Gorard, Hordosy & Siddiqui (2012) found the correlation between estimates for secondary schools in England in successive years to be between 0.6 and 0.8. They argued that this, combined with the problem of missing data, makes it meaningless to describe a school as ‘effective’ on the basis of value-added.

So, could we rely on student ratings? Most teachers will recoil in horror at this idea; there's the very real danger that this would quickly turn into a popularity contest as often happens in Higher Education with the 'coolest' profs getting the best ratings. The report doesn't really reach a judgement but does cite research that suggests school students "responded to the range of items with reason, intent, and consistent values". However, it also states that "student ratings of teacher behavior are highly correlated with value-added measures of student cognitive and affective outcomes". I'll just bet they are! So, students who are performing well and feeling good rate their teachers highly. No surprises there.

My view is that student evaluations can be a useful self-improvement tool, but provide weak evidence of teacher effectiveness. For interesting ideas on how student ratings could be used effectively, take a look at Nick Rose's excellent blog.
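As an aside on the value-added figures quoted above: a year-on-year correlation of 0.6 to 0.8 can sound reassuringly high, so here is a rough sketch of what it might mean in practice. This is my own toy simulation, not anything from the report. It assumes standardised, normally distributed school scores, and the function name `top_quarter_persistence` and the 20,000 simulated schools are purely illustrative choices. It prints the shared variance (the correlation squared) and the share of top-quarter schools that would still be in the top quarter a year later.

```python
import random


def top_quarter_persistence(r, n_schools=20000, seed=0):
    """Share of schools in the top quarter in year 1 that stay there in year 2.

    Assumption (not from the report): scores are standard normal in both
    years and correlate at r from one year to the next.
    """
    rng = random.Random(seed)
    year1, year2 = [], []
    for _ in range(n_schools):
        a = rng.gauss(0, 1)
        noise = rng.gauss(0, 1)
        # Build a year-2 score with unit variance that correlates at r with year 1.
        b = r * a + (1 - r ** 2) ** 0.5 * noise
        year1.append(a)
        year2.append(b)

    # Cut-off scores marking the top quarter in each year.
    cut1 = sorted(year1)[int(0.75 * n_schools)]
    cut2 = sorted(year2)[int(0.75 * n_schools)]

    top1 = [i for i in range(n_schools) if year1[i] >= cut1]
    stayed = sum(1 for i in top1 if year2[i] >= cut2)
    return stayed / len(top1)


if __name__ == "__main__":
    for r in (0.6, 0.8):
        share = top_quarter_persistence(r)
        print(f"r = {r}: shared variance = {r ** 2:.2f}, "
              f"still in top quarter next year = {share:.0%}")
```

The arithmetic half is not in doubt: squaring 0.6 and 0.8 gives a shared variance of 0.36 to 0.64. The persistence figures depend entirely on the normality assumption, so treat them as a feel for the problem rather than a finding.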

Of the remaining methods, the analysis of classroom artefacts (students' work) and teacher portfolios are interesting ideas but difficult to implement. Teacher self-assessment is unlikely to gain much traction - Yeah, I think I'm doing great actually, thanks for asking.

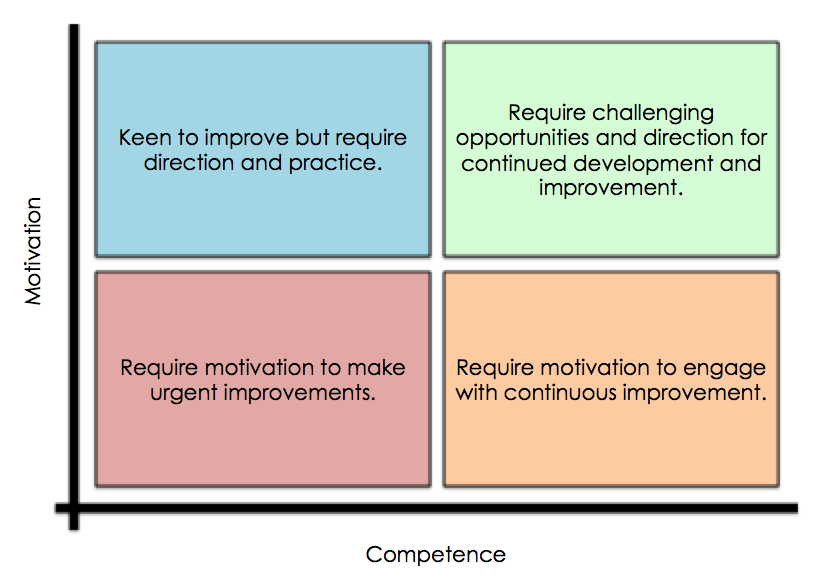

This leaves us with Headteacher evaluations. The report isn't particularly keen, but this is where I think we should place our bets. You see, any half-way decent Head has a pretty good idea of the quality of her teachers. She knows who the high flyers are and she is most certainly aware of who is causing concern. Every Head I've asked has been able to reel off their Top 3 and Bottom 3 almost without pause for thought. As I've shared before, heads find it easy to place staff into this matrix:

Observations and data trawls obscure the fact that we just know.

But hang on, can we really trust Head Teachers' subjective, value-ridden judgements? Can we really trust them not to play favourites and fall victim to cognitive bias? No, of course we can't. Kev Bartle makes an excellent point in the comments below that actually Heads should be trusting their senior and middle leaders to form these judgements. We are all only human and it's all too human to err. But then, there are no valid and reliable objective measures of teachers' effectiveness - this at least makes no pretence. The trick, I think, is to make these judgements more transparent and to make leaders accountable for their evaluation process.

What I think we should do is find a set of questions leaders could use to give shape to their subjective judgements. I confess I'm not sure what these questions should be, but I set a challenge to Steve Higgins, one of the co-authors of the Sutton Trust report, to come up with questions which would force evaluators to really drill into precisely why they feel teachers are effective or ineffective. While he thinks about that, what do you think of these?

- What is the teacher's attendance and punctuality like? If there are concerns, are there any mitigating factors?

- Does the teacher follow school policies on uniform, standards of behaviour, professionalism etc.?

- Does the teacher collaborate with other staff?

- What is the opinion of the teacher's line manager and colleagues?

- Does the teacher take part in extra-curricular activities?

- Does the teacher 'add value' to the school in any other way?

- Are you confident in the teacher's classroom performance? How do you know?

- What actions has the teacher taken to develop professionally?

- Are students confident in the teacher's performance? How do you know?

- Are parents confident in the teacher's performance? How do you know?

- What are their results like? How do they compare to other teachers' results?

- What are their students' books like? Does it look like students are making progress?

- What does the teacher's classroom look like?

- What does the teacher make of their own performance?

- Do you like the teacher? Why? [This is to interrogate biases in the evaluator.]

And of course, we should have a similar set of questions to guide governors' evaluations of Heads. All of this depends on the Headteacher trusting teachers and leaders to be professionals. If a school is led by a weak Head, all is lost.

Anyway, this is just an idea. I think it has legs, but I'd appreciate thoughts and critique.

Image courtesy of Shutterstock
