Can we trust the evidence of our own eyes?

On the face of it, this would appear to be self-evidently true. Why bother testing the efficacy of something we can 'see' working? Well, as I've pointed out before, we are all victims of powerful cognitive biases which prevent us from acknowledging that we might be wrong. Here's a reminder of some of these biases:

- Confirmation bias - the fact that we seek out only that which confirms what we already believe

- The Illusion of Asymmetric Insight - the belief that though our perceptions of others are accurate and insightful, their perceptions of us are shallow and illogical. This asymmetry becomes more stark when we are part of a group. We progressives see clearly the flaws in traditionalist arguments, but they, poor saps, don’t understand the sophistication of our arguments.

- The Backfire Effect - the fact that when confronted with evidence contrary to our beliefs we will rationalise our mistakes even more strongly

- Sunk Cost Fallacy - the irrational response to having wasted time, effort or money: I’ve committed this much, so I must continue or it will have been a waste. I spent all this time training my pupils to work in groups so they’re damn well going to work in groups, and damn the evidence!

- The Anchoring Effect - the fact that we are incredibly suggestible and base our decisions and beliefs on what we have been told, whether or not it makes sense. Retailers are expert at gulling us, and so are certain education consultants.

In addition to all of these psychological 'blind spots' we are also possessed of physiological blind spots. There are things which we, quite literally, cannot see. Due to a peculiarity of how our eyes are wired, there are no cells to detect light on the optic disc – this means that there is an area of our vision which is not perceived. This is called a scotoma. But how can that be? Surely if there was a bloody great patch of nothingness in our field of vision, we’d notice, right? Wrong. Cleverly, our brain infers what’s in the blind spot based on surrounding detail and information from our other eye, and fills in the blank. So when we look at a scene, whether it’s a static landscape or a hectic rush of traffic, our brain cuts details from the surrounding images and pastes in what it thinks should be there. For the most part our brains get it right, but then occasionally they paste in a bit of empty motorway when what’s actually there is a motorbike!

Maybe you’re unconvinced? Fortunately there’s a very simple blind spot test:

R L

Close your right eye and focus your left eye on the letter L. Shift your head so you’re about 24cm away from the page and move your head either towards or away from the page until you notice the R disappear. (If you’re struggling, try closing your left eye instead and focusing your right eye on the letter R until the L disappears.)

So, how can we tell when our perception is accurate and when it’s not?

Worryingly, we can’t.

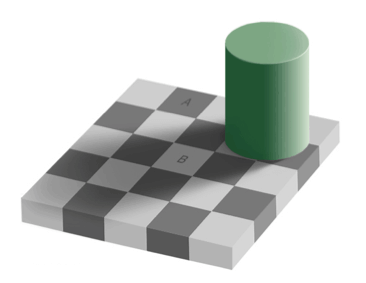

On top of this we also fall prey to compelling optical illusions. Take a look at this picture:

Contrary to the evidence of our eyes, the squares labelled A and B are exactly the same shade of grey. That’s insane, right? Obviously they’re a different shade. We know because we can clearly see they’re a different shade. Anyone claiming otherwise is an idiot.

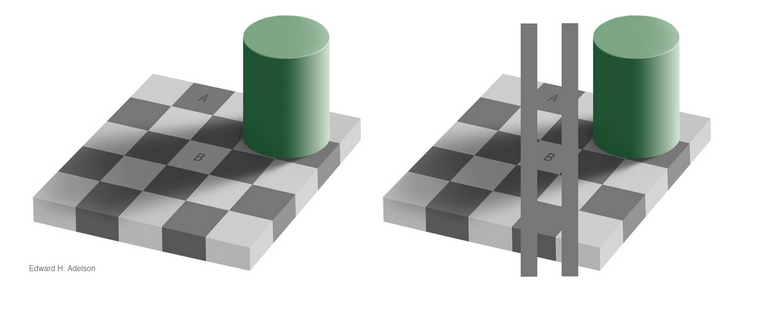

Well, no. As this second illustration shows, the two squares really are the same shade.

How can this be so? Our brains know A is dark and B is light, so we edit out the effects of the shadow cast by the green cylinder and compensate for the limitations of our eyes. We literally see something that isn’t there. This is a common phenomenon, and Kathryn Schulz describes illusions as “a gateway drug to humility” because they teach us what it is like to confront the fact that we are wrong in a non-threatening way.

So should we place our trust in research or can we trust our own experiences? Well, maybe. Sometimes if it walks like a duck and sounds like a duck, it's a duck. But we're often so eager to accept that we're right and others must be wrong that anyone interested in what's true, rather than what they'd prefer to be true, should take the view that the more complicated the situation, the more likely we are to have missed something.

Sometimes when it looks like a duck it’s actually a rabbit.

It is, however, entirely reasonable to ask for stronger evidence when findings conflict with common sense and our direct observations. The burden of proof should always be with those making claims rather than with those expressing quite proper scepticism.

Kathryn Schulz says that our obsession with being right is "a problem for each of us as individuals, in our personal and professional lives, and... a problem for all of us collectively as a culture." Firstly, the "internal sense of rightness that we all experience so often is not a reliable guide to what is actually going on in the external world. And when we act like it is, and we stop entertaining the possibility that we could be wrong, well that's when we end up doing things like dumping 200 million gallons of oil into the Gulf of Mexico, or torpedoing the global economy." Secondly, the "attachment to our own rightness keeps us from preventing mistakes when we absolutely need to, and causes us to treat each other terribly." But an exploration of how and why we get things wrong "is the single greatest moral, intellectual and creative leap [we] can make."

Here she is talking about being wrong:

[ted id=1126]
