Is it possible to get assessment right?

May 23, 2015

No. After my last blog on how to get assessment wrong, various readers got in touch to say, OK smart arse, what should we do?

Well, I'm afraid the bad news is that we'll never get assessment right. Or at least, it's impossible for assessment to give us anything like perfect information on students' progress or learning. We can design tests to give us pretty good information on students' mastery of a domain, but as Amanda Spielman, chair of Ofqual, said at researchED in September, the best we can ever expect from GCSEs is to narrow student achievement down to + or - one whole grade! We could improve this huge margin of error, but only if we were willing for students to sit 114-hour exams.
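For anyone who wants to see why a narrower margin of error demands so much more exam time, here's a rough sketch in Python using classical test theory. This is an illustration rather than anything Spielman presented: the standard error of measurement and the Spearman-Brown formula are standard psychometrics, but the reliability of 0.90 and the spread of 1.5 grades are invented numbers, not real GCSE figures.

```python
import math

def standard_error(sd, reliability):
    """Standard error of measurement: sd * sqrt(1 - reliability)."""
    return sd * math.sqrt(1 - reliability)

def lengthened_reliability(reliability, k):
    """Spearman-Brown: reliability of a test made k times longer."""
    return k * reliability / (1 + (k - 1) * reliability)

def length_factor_needed(reliability, target):
    """Spearman-Brown rearranged: how many times longer the test must be."""
    return target * (1 - reliability) / (reliability * (1 - target))

# Hypothetical numbers, chosen only for illustration.
SD_GRADES = 1.5      # assumed spread of grades across the cohort
RELIABILITY = 0.90   # assumed reliability of the current exam

current_sem = standard_error(SD_GRADES, RELIABILITY)

# Halving the error means cutting (1 - reliability) to a quarter of its value.
target = 1 - (1 - RELIABILITY) / 4
factor = length_factor_needed(RELIABILITY, target)

print(f"current error: +/- {current_sem:.2f} grades")
print(f"to halve it, the exam must be about {factor:.1f} times longer")
```

With these made-up inputs, halving the margin of error needs an exam more than four times as long. Each extra hour of testing buys less precision than the last, which is why the trade-off gets so ugly so quickly.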

As Daniel Koretz makes clear in Measuring Up, a test can only ever sample what a student is able to do. And while psychometricians work very hard to design tests which provide as representative a sample as they are able, they are confounded by seemingly insurmountable factors, particularly the wording of rubrics and the attitudes of test takers and teachers.

So, we can never design a perfect assessment system. Of course we can (and should) try, but we need to know we're using a metaphor to map a mystery. The mystery is the imponderable complexity of what's going on in students' brains; the metaphor is our feeble attempts to define and describe what learning looks like.

So, with this in mind, can we design assessment systems that are at least useful?

Again, that depends. It mainly depends on what we're intending to use assessment for. For all their faults, national tests are useful in that they give a broadly understandable and comparable approximation of a student's effort and ability to master a domain of knowledge. This is a necessarily blunt measure, but we all agree to ignore all but the grossest errors because it's the best we can do. We might not like 'em, but exams are a pretty reliable proxy of how well a student has done at school. To that end, SATs, GCSEs and A levels are flawed but workable means to compare how schools are performing.

But we also want to use assessment systems for other, more formative purposes. We use them to report progress to parents, share information with students and inform decisions about teaching and curriculum design. Can we design assessment systems that help us do these things?

We can, but they're not going to be very good. The only real choice we get to make is how bad they're likely to be. One way to go is to follow in the footsteps of Trinity Academy's Mastery Pathways. This is a system of assessment inextricably intertwined with curriculum and teaching, where the basic foundational skills and knowledge are taught first and then repeated until students have proved they have mastered them. I can really see how this works in a subject like mathematics: students learn an elementary foundation, take a test, then either repeat the stage or progress to slightly more advanced content which depends on mastery of the previous stage.

So, after the first block of content in Year 7 - Elementary 1 - students have to prove they can:

- Multiply and divide numbers by 10, 100 and 1000 including decimals.

- Recognise and use square and cube notation.

- Long multiplication of up to 4 digit numbers by 1 or 2 digit numbers.

- Short division, up to 4 digits by 1 digit (including remainders).

- Short division, up to 4 digits by 2 digits where appropriate.

- Understand different ways to represent remainders.

- Recognise mixed numbers and improper fractions.

- Convert between mixed numbers and improper fractions.

- Read, write, order and compare numbers up to three decimal places.

- Read and write decimal numbers as fractions.

- Use common factors to simplify fractions; use common multiples to express fractions in the same denomination.

- Multiply proper and improper fractions by an integer.

- Round a number with two decimal places to a whole number and 1 decimal place.

- Work with co-ordinates in all 4 quadrants.

- Express one quantity as a fraction of another, where the fraction is less than one or greater than one.
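The repeat-or-progress logic behind a list like this can be sketched in a few lines of Python. This is a guess at the shape of such a system, not Trinity Academy's actual implementation: the `Stage` class, the `next_step` function and the "Elementary 2" stage are all hypothetical, and it assumes mastery means demonstrating every objective rather than hitting a pass mark.

```python
from dataclasses import dataclass

@dataclass
class Stage:
    name: str
    objectives: list[str]

def unmet_objectives(stage, results):
    """Return the objectives the student has not yet demonstrated."""
    return [obj for obj in stage.objectives if not results.get(obj, False)]

def next_step(stages, current_index, results):
    """Repeat the current stage until every objective is met, then progress."""
    gaps = unmet_objectives(stages[current_index], results)
    if gaps:
        return current_index, gaps  # repeat the stage, reteaching the gaps
    # Move on, staying on the final stage once there is nowhere further to go.
    return min(current_index + 1, len(stages) - 1), []

# Two objectives borrowed from the Elementary 1 list above;
# "Elementary 2" and its objective are invented placeholders.
stages = [
    Stage("Elementary 1", [
        "Recognise and use square and cube notation",
        "Convert between mixed numbers and improper fractions",
    ]),
    Stage("Elementary 2", ["(placeholder objective)"]),
]

# A student who has so far proved only one of the two objectives.
results = {"Recognise and use square and cube notation": True}
index, gaps = next_step(stages, 0, results)
print(stages[index].name, gaps)
```

Here the student stays on Elementary 1 with one gap still to close. The test result only gates progression: it says nothing about how securely anything has been learned.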

Another option is to follow Michaela's model, where students follow a sequenced curriculum in which English and humanities support each other, beginning in the ancient world with each subsequent topic building on the knowledge already gained. In this model students take weekly knowledge tests where the aim is to achieve 100%. Progression is determined by whether or not students have retained lesson content. Take a look at Joe Kirby's blog for more details.

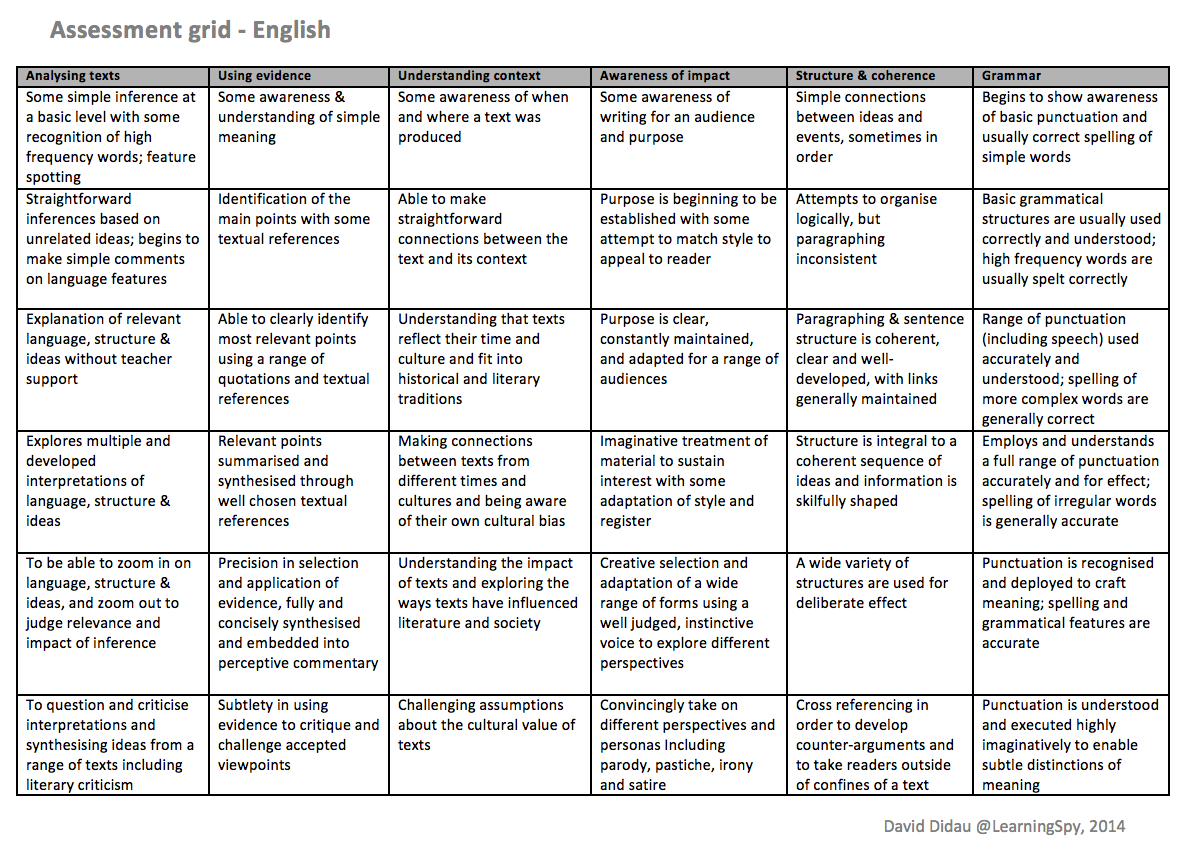

Another way to go is to use threshold concepts in an attempt to anticipate where students are likely to get stuck and help guide them through liminal space. Here's my example of a Key Stage 4 English curriculum designed along these lines. This is all very well, but how can you ever hope to assess how students are progressing through these thresholds? My current answer is to describe what the journey might look like. This is a path fraught with danger. Daisy Christodoulou makes clear that performance descriptors are, in the main, a nonsense. As she says,

The problem is not a minor technical one that can be solved by better descriptor drafting, or more creative and thoughtful use of a thesaurus. It is a fundamental flaw. I worry when I see people poring over dictionaries trying to find the precise word that denotes performance in between ‘effective’ and ‘original’. You might find the word, but it won’t deliver the precision you want from it. Similarly, the words ‘emerging’, ‘expected’ and ‘exceeding’ might seem like they offer clear and precise definitions, but in practice, they won’t.

And of course, she's right. You can never hope for precision from performance descriptors, but then precision will always be impossible to achieve. Maybe what you can get from performance descriptors is narrative and meaning. Here's the metaphor I've been using to map the mysteries of learning English as an academic subject:

Of course, this is not precise. Although some care has been put into making the descriptors as meaningful as possible, it gives us only the vaguest hints at what students might be able to do as they 'progress' through the six thresholds, but what it might provide is a means of discussing what it means to be stuck, and describing what students might be able to do in the future. It has been designed with a particular curriculum in mind and so should not be taken as something able to stand alone, but even so, it should be seen as not so much a map as a travel guide - pointing out interesting sights and potential places of interest along the way. My idea is that students can be given a 'performance graph' at various points within the curriculum to provide a snapshot of some of the things they can do now. Maybe over three different assessment points you might get something like this:

If you prefer, we could try to show all this on one table:

These graphs might be useful for students and parents as a starting point in a conversation about how the journey seems to be going. As long as you prevent yourself from being fooled into thinking they provide proof of anything, or that you can ever be certain about what a student is learning, I think this system 'works'.

To sum up, I think there are three main points I'd like to make about assessment:

- It will always be imprecise.

- Assessment should never be a substitute for the actual work students produce.

- Assessment should never be used to make judgements on anything as complex as learning or progress, and should only be used to make judgements on achievement or attainment when left to the experts at the end of Key Stages.
